An $n$-gram is a sequence of $n$ consecutive tokens (words). The classic Bag of Words (BoW) representation uses unigrams ($n = 1$), meaning single words. The bag-of-$n$-grams representation generalizes BoW to other values of $n$.

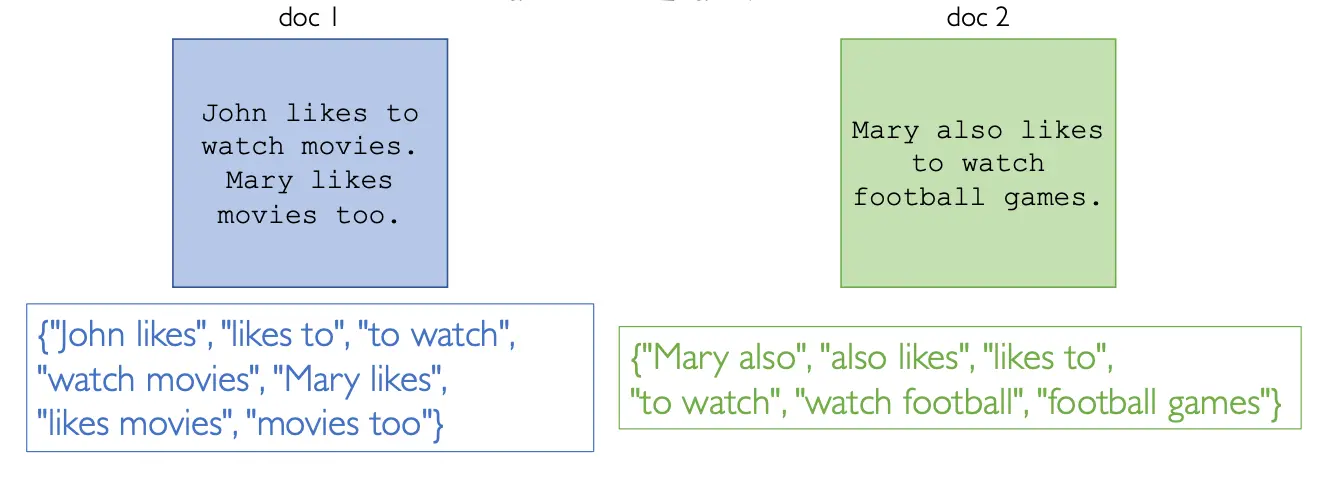

An example of bigrams ($n = 2$)
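A minimal sketch of word-level $n$-gram extraction and the resulting bag-of-$n$-grams counts follows. It assumes naive whitespace tokenization. The function names `word_ngrams` and `bag_of_ngrams` are hypothetical helpers for illustration, not part of any library.

```python
from collections import Counter


def word_ngrams(text: str, n: int) -> list[tuple[str, ...]]:
    """Return the word n-grams of `text` as tuples, in order of appearance."""
    if n < 1:
        raise ValueError("n must be at least 1")
    # Naive whitespace tokenization (assumption); real pipelines usually
    # also lowercase and strip punctuation.
    tokens = text.split()
    # Slide a window of width n over the tokens; empty if the text is shorter than n.
    return [tuple(tokens[i:i + n]) for i in range(len(tokens) - n + 1)]


def bag_of_ngrams(text: str, n: int) -> Counter:
    """Count how often each n-gram occurs; like BoW, the bag discards order."""
    return Counter(word_ngrams(text, n))


sentence = "the cat sat on the mat"
print(word_ngrams(sentence, 1))  # unigrams: the tokens of a plain BoW
print(word_ngrams(sentence, 2))  # bigrams
print(bag_of_ngrams("the cat saw the cat", 2))
```

With $n = 1$ this reduces to ordinary BoW. Larger $n$ keeps some local word order, such as ("the", "cat") versus ("cat", "the"), at the cost of a much larger vocabulary.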

Since $n$ is a hyperparameter, the simplest way to find a good value is to try several values of $n$ and keep the one that gives the best results. Typically the best $n$ is … or ….
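The "try several values of $n$" loop can be sketched as below. The scoring method is an assumption chosen only to keep the example self-contained. It uses leave-one-out accuracy of a 1-nearest-neighbour classifier with cosine similarity on $n$-gram counts, over a tiny hypothetical labeled corpus. In practice you would score each $n$ on a held-out validation set with whatever model you actually train.

```python
import math
from collections import Counter


def ngram_counts(text, n):
    """Bag of word n-grams for one document (lowercased, whitespace-tokenized)."""
    tokens = text.lower().split()
    return Counter(tuple(tokens[i:i + n]) for i in range(len(tokens) - n + 1))


def cosine(a, b):
    """Cosine similarity between two sparse count vectors stored as Counters."""
    dot = sum(count * b[gram] for gram, count in a.items())  # missing keys count as 0
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def loo_accuracy(texts, labels, n):
    """Leave-one-out accuracy of a 1-nearest-neighbour classifier on n-gram counts."""
    vectors = [ngram_counts(t, n) for t in texts]
    correct = 0
    for i, vec in enumerate(vectors):
        # Predict document i's label from its most similar *other* document.
        best_j = max((j for j in range(len(vectors)) if j != i),
                     key=lambda j: cosine(vec, vectors[j]))
        correct += labels[best_j] == labels[i]
    return correct / len(texts)


def select_best_n(texts, labels, candidate_ns=(1, 2, 3)):
    """Score every candidate n and return (best_n, scores)."""
    scores = {n: loo_accuracy(texts, labels, n) for n in candidate_ns}
    # max() keeps the first maximal key, so ties go to the n listed first.
    best = max(scores, key=scores.get)
    return best, scores


# Hypothetical toy corpus, for illustration only.
texts = ["the food was not good", "the food was really good",
         "service was not good at all", "service was really good today"]
labels = ["neg", "pos", "neg", "pos"]
best, scores = select_best_n(texts, labels)
print(best, scores)
```

Listing the smaller candidates first makes ties resolve toward the cheaper representation, since the vocabulary grows quickly with $n$.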

$n$-gram splitting can also be applied at the character level instead of the word level. In that case each word is split into sequences of $n$ characters, rather than the text being split into sequences of $n$ words.
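A minimal sketch of character-level $n$-grams follows. The `char_ngrams` helper is hypothetical. The optional `mark_boundaries` flag wraps the word in `<` and `>` so that prefixes and suffixes get their own $n$-grams. This follows the convention used by fastText's subword model and is shown only as one possible variant.

```python
from collections import Counter


def char_ngrams(word: str, n: int, mark_boundaries: bool = False) -> list[str]:
    """Return the character n-grams of a single word, in order."""
    if n < 1:
        raise ValueError("n must be at least 1")
    if mark_boundaries:
        # Boundary symbols distinguish e.g. the prefix "<wh" from an inner "wh".
        word = f"<{word}>"
    # Same sliding window as for words, but over characters.
    return [word[i:i + n] for i in range(len(word) - n + 1)]


print(char_ngrams("hello", 3))
print(char_ngrams("where", 3, mark_boundaries=True))
print(Counter(char_ngrams("banana", 2)))  # a bag of character bigrams
```

Character $n$-grams let misspelled or unseen words still share features with known words, because most of their short substrings overlap.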