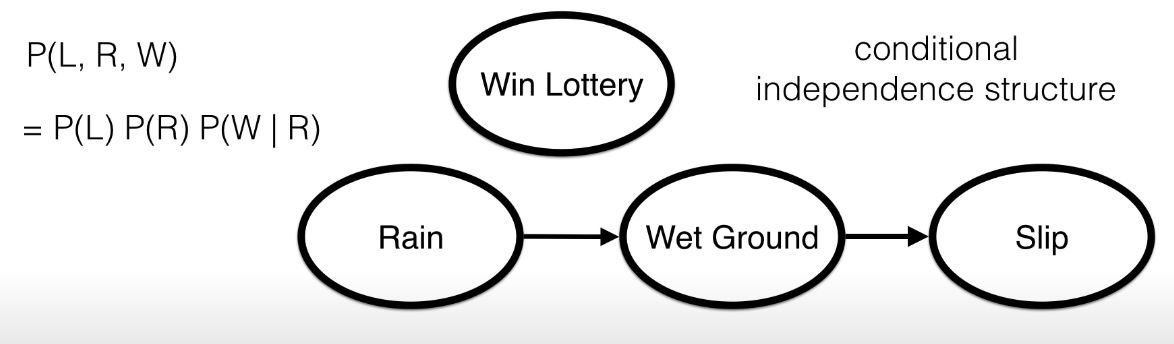

A Bayesian Network, also known as a Directed Graphical Model, is a DAG (Directed Acyclic Graph) in which each node is an event and each parent of a node is an event on which that node conditionally depends.

In this Network we can see that the probability of (Slip) depends on (Wet ground) and (Rain), but (Win lottery) is completely independent from the others.

Because an edge means "the child conditionally depends on the parent", the graph has to be acyclic: a cycle would make an event depend, through its own descendants, on itself.
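The acyclicity requirement can be checked mechanically. Below is a minimal sketch, assuming the network is stored as a map from each node to its parents. The edges used here (Rain → Wet ground → Slip, with Win lottery isolated) are an assumption about the first figure, and `is_acyclic` is a hypothetical helper name.

```python
# Hypothetical encoding of the example network: node -> set of parents.
# The exact edges are an assumption; every node must appear as a key.
parents = {
    "Rain": set(),
    "Wet ground": {"Rain"},
    "Slip": {"Wet ground"},
    "Win lottery": set(),
}


def is_acyclic(parents):
    """Return True if the parent map describes a DAG (Kahn-style peeling)."""
    remaining = {node: set(ps) for node, ps in parents.items()}
    while remaining:
        # Nodes whose parents have all been removed already.
        roots = [node for node, ps in remaining.items() if not ps]
        if not roots:
            # Every remaining node still waits on a parent: there is a cycle.
            return False
        for node in roots:
            del remaining[node]
        for ps in remaining.values():
            ps.difference_update(roots)
    return True


print(is_acyclic(parents))
print(is_acyclic({"A": {"B"}, "B": {"A"}}))
```

The function repeatedly removes nodes that have no unresolved parents; if it gets stuck before the graph is empty, the leftover nodes form at least one cycle.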

Given that, we can say that:

- Each variable is conditionally independent (see the definition of Conditional Independence) of its non-descendants given its parents.

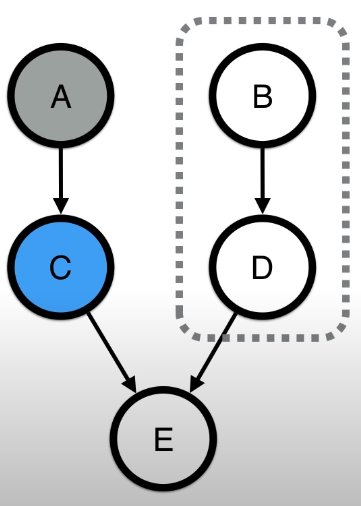

In this figure, if we consider the variable , and we observe , we can say that and are not influenced by .

Also here, is independent from and , since they are its non-descendants, and so it depends only on its parent.

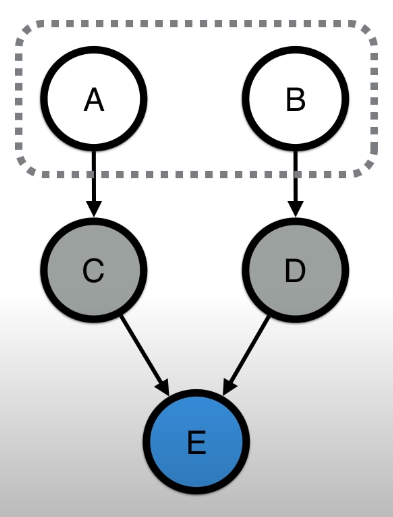

- Each variable is conditionally independent of any other variable given its Markov blanket (its parents, its children, and its children’s other parents). This means that, once the blanket is observed, no variable outside it influences the variable. This is very useful for efficiency.
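As an illustration, the Markov blanket of a node (its parents, its children, and the other parents of its children) can be read directly off the graph. This is a minimal sketch on a hypothetical network with nodes `A` to `F`, not the one in the figure; `markov_blanket` is an illustrative helper name.

```python
# Hypothetical network (not the one in the figure): node -> list of parents.
# Edges: A -> C, B -> C, C -> D, E -> D, D -> F
parents = {
    "A": [],
    "B": [],
    "C": ["A", "B"],
    "D": ["C", "E"],
    "E": [],
    "F": ["D"],
}


def markov_blanket(node, parents):
    """Return the parents, children and children's other parents of `node`."""
    children = [n for n, ps in parents.items() if node in ps]
    blanket = set(parents[node])          # parents
    blanket.update(children)              # children
    for child in children:
        blanket.update(parents[child])    # co-parents (includes `node` itself)
    blanket.discard(node)
    return blanket


print(sorted(markov_blanket("C", parents)))
```

For `C` the blanket contains `A` and `B` (parents), `D` (child) and `E` (the other parent of `D`). `F` is left out: once `D` is observed, `F` carries no extra information about `C`.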

Inference

Let’s see how, given a Bayesian Network that describes a joint probability, we can compute a marginal probability (this is inference).

The most naive approach is enumeration: computing the exact marginal probability by summing the joint probability over every possible value of all the other variables.
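In symbols, and using notation that is assumed here rather than taken from the figures ($X_1, \dots, X_n$ for the variables and $\mathrm{Pa}(X_i)$ for the parents of $X_i$), the joint probability factorizes along the graph, and a marginal is obtained by summing out everything else:

$$
P(X_1, \dots, X_n) = \prod_{i=1}^{n} P\big(X_i \mid \mathrm{Pa}(X_i)\big)
$$

$$
P(X_j) = \sum_{x_1} \cdots \sum_{x_{j-1}} \sum_{x_{j+1}} \cdots \sum_{x_n} \; \prod_{i=1}^{n} P\big(X_i \mid \mathrm{Pa}(X_i)\big)
$$

For binary variables the second expression has $2^{n-1}$ terms, which is where the cost of enumeration comes from.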

Let’s see an example with the first graph we have seen. From it, we want to compute the marginal probability of (Rain) (is it raining or not?).

To do so, we have to iterate over every combination of values of all the other variables. The number of combinations grows exponentially with the number of variables, so this is very expensive for anything but a small graph. A solution to this problem is to use variable elimination.
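Enumeration can be sketched in a few lines. Everything specific below is an assumption made for illustration: the edge structure (Rain → WetGround → Slip, with WinLottery isolated), the identifier names, and all the probability values, which are made up.

```python
from itertools import product

# Assumed structure of the example network: variable -> tuple of parents.
parents = {
    "Rain": (),
    "WetGround": ("Rain",),
    "Slip": ("WetGround",),
    "WinLottery": (),
}

# Made-up numbers: cpt[var][parent_values] = P(var = True | parent_values)
cpt = {
    "Rain": {(): 0.2},
    "WetGround": {(True,): 0.9, (False,): 0.1},
    "Slip": {(True,): 0.3, (False,): 0.05},
    "WinLottery": {(): 0.001},
}


def joint(assignment):
    """P(full assignment) as the product of each variable's conditional."""
    p = 1.0
    for var, pars in parents.items():
        p_true = cpt[var][tuple(assignment[q] for q in pars)]
        p *= p_true if assignment[var] else 1.0 - p_true
    return p


def marginal(var, value=True):
    """P(var = value), obtained by summing the joint over all other variables."""
    others = [v for v in parents if v != var]
    total = 0.0
    # 2 ** len(others) combinations: this is the exponential cost.
    for values in product([True, False], repeat=len(others)):
        assignment = dict(zip(others, values))
        assignment[var] = value
        total += joint(assignment)
    return total


print(marginal("Rain"))
print(marginal("Slip"))
```

The loop in `marginal` visits every combination of the other variables, so its running time doubles with each binary variable added to the network. Variable elimination avoids this by pushing the sums inside the product and reusing intermediate results.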

tags: probability-theory - graph-theory