The curse of dimensionality can be mitigated in the feature space with dimensionality reduction methods, such as Principal Component Analysis (PCA).

PCA transforms the data by creating a new space whose axes are the principal components of the data. There are as many principal components as there are dimensions in the original data.

The principal components are the eigenvectors of the data's covariance matrix, ordered by eigenvalue. Each eigenvector gives a direction in feature space, and its eigenvalue measures the variance of the data along that direction. The first PC is the one with the highest eigenvalue, the second is the one with the next highest, and so on. A PC is just a linear combination of all the features.
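As a minimal sketch of the idea above, the principal components can be computed with NumPy by eigendecomposing the covariance matrix of the centred data. The function name `principal_components` and the toy data are assumptions made for illustration only.

```python
import numpy as np

def principal_components(X):
    """Return (eigenvalues, components), sorted by decreasing eigenvalue.

    X has shape (n_samples, n_features); `components` holds one PC per row.
    """
    X_centred = X - X.mean(axis=0)            # centre each feature
    cov = np.cov(X_centred, rowvar=False)     # (n_features, n_features)
    # eigh is meant for symmetric matrices; it returns eigenvalues in ascending order
    eigvals, eigvecs = np.linalg.eigh(cov)
    order = np.argsort(eigvals)[::-1]         # largest eigenvalue first
    return eigvals[order], eigvecs[:, order].T

# Toy data (made up): three independent features with very different spreads
rng = np.random.default_rng(0)
X = rng.normal(size=(500, 3)) * np.array([5.0, 2.0, 0.5])

eigvals, components = principal_components(X)
```

Here the first row of `components` should point almost entirely along the first feature, since that is where the toy data varies the most.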

We can compute all the principal components. Because the covariance matrix is symmetric, its eigenvectors can be chosen to be mutually orthogonal, so every principal component is orthogonal to all the ones before it. As said, the maximum number of PCs is the number of dimensions the data is described in.
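A quick numerical check of the orthogonality claim, as a sketch on made-up correlated data: the matrix of eigenvectors multiplied by its own transpose should be (numerically) the identity, and there should be exactly as many eigenvectors as features.

```python
import numpy as np

rng = np.random.default_rng(1)
# Made-up correlated data: 200 samples, 4 features
X = rng.normal(size=(200, 4)) @ rng.normal(size=(4, 4))

cov = np.cov(X - X.mean(axis=0), rowvar=False)
_, eigvecs = np.linalg.eigh(cov)              # columns are the eigenvectors

gram = eigvecs.T @ eigvecs                    # pairwise dot products
is_orthonormal = np.allclose(gram, np.eye(4))
n_components = eigvecs.shape[1]               # one PC per original dimension
```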

To perform dimensionality reduction, it’s possible to remove some of the last principal components, since they are the axes that carry the least variance and therefore, usually, the least information. A common rule of thumb is to keep the first few principal components whose cumulative proportion of variance, meaning the variance they explain relative to the total variance, reaches a chosen threshold such as 99%.
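The cumulative-variance rule can be sketched as follows. The helper name `n_components_for_variance` and the eigenvalues are hypothetical, chosen only to make the arithmetic easy to follow.

```python
import numpy as np

def n_components_for_variance(eigvals, threshold=0.99):
    """Smallest k such that the first k PCs explain at least `threshold` of the variance."""
    eigvals = np.sort(np.asarray(eigvals, dtype=float))[::-1]
    cumulative = np.cumsum(eigvals) / eigvals.sum()
    # First index whose cumulative ratio reaches the threshold, converted to a count
    k = int(np.searchsorted(cumulative, threshold)) + 1
    return min(k, len(eigvals))               # guard against rounding at threshold = 1.0

# Made-up eigenvalues: cumulative ratios are 0.90, 0.995, 0.999, 1.0
eigvals = [90.0, 9.5, 0.4, 0.1]
k = n_components_for_variance(eigvals, threshold=0.99)
```

With these numbers the first component alone explains 90% of the variance, and the first two together explain 99.5%, so two components are kept.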

We can then project the data onto the new lower-dimensional space, representing it with the new axes while retaining most of the information.
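A minimal sketch of the projection step, assuming the components are stored one per row in a matrix called `W` here (the name, like `pca_project` and the toy data, is an assumption for illustration).

```python
import numpy as np

def pca_project(X, k):
    """Project X (n_samples, n_features) onto its first k principal components."""
    mean = X.mean(axis=0)
    X_centred = X - mean
    eigvals, eigvecs = np.linalg.eigh(np.cov(X_centred, rowvar=False))
    order = np.argsort(eigvals)[::-1][:k]     # indices of the k largest eigenvalues
    W = eigvecs[:, order].T                   # (k, n_features), one PC per row
    Z = X_centred @ W.T                       # (n_samples, k) reduced representation
    return Z, W, mean

# Toy data (made up): only the first two features carry noticeable variance
rng = np.random.default_rng(2)
X = rng.normal(size=(300, 5)) * np.array([4.0, 3.0, 0.2, 0.1, 0.1])

Z, W, mean = pca_project(X, k=2)
retained = Z.var(axis=0, ddof=1).sum() / X.var(axis=0, ddof=1).sum()
```

`retained` is the fraction of the total variance that survives the projection; for this toy data it should be very close to 1 even though three of the five dimensions were dropped.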

So, in general, PCA is not by itself a dimensionality reduction method, but a data transformation method. But since the new space is built from principal components that are ordered by the amount of variance they capture, it’s possible to drop some of the last ones to reduce the number of features.


Reconstruction: starting from the reduced representations, we want to recover the original data points. Of course, we won’t get exactly the same points back, since some information was lost when the last components were dropped.
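Reconstruction can be sketched by mapping the reduced representation back through the kept components and adding the mean back. The data and the helper name `reconstruct` are assumptions; the point is only that the error is nonzero when components are dropped and vanishes when all are kept.

```python
import numpy as np

rng = np.random.default_rng(3)
# Toy data (made up): 300 samples, 4 features with decreasing spread
X = rng.normal(size=(300, 4)) * np.array([3.0, 2.0, 0.3, 0.1])

mean = X.mean(axis=0)
X_centred = X - mean
eigvals, eigvecs = np.linalg.eigh(np.cov(X_centred, rowvar=False))
order = np.argsort(eigvals)[::-1]
eigvals, eigvecs = eigvals[order], eigvecs[:, order]

def reconstruct(k):
    """Project onto the first k PCs, then map back to the original space."""
    W = eigvecs[:, :k].T                      # (k, n_features)
    Z = X_centred @ W.T                       # reduced representation
    return Z @ W + mean                       # approximate original points

# Mean squared reconstruction error per data point
err_2 = np.mean(np.sum((X - reconstruct(2)) ** 2, axis=1))
err_4 = np.mean(np.sum((X - reconstruct(4)) ** 2, axis=1))
```

Keeping all four components gives an error of essentially zero, while keeping two leaves an error equal to the variance carried by the two dropped components (up to the `(n - 1) / n` factor from the covariance estimate).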

PCA finds the principal components (the rows of the transformation matrix) by maximising the sum of the squared lengths of the orthogonal projections of the centred data points, which is the same as maximising the variance of the projected data.
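Written out for the first component, under notation assumed here rather than taken from these notes ($x_i$ for the data points, $\bar{x}$ for their mean, $n$ for the number of points, $w$ for a unit-length direction, $\Sigma$ for the covariance matrix), the objective is:

$$
w_1 = \arg\max_{\|w\| = 1} \frac{1}{n} \sum_{i=1}^{n} \left( w^\top (x_i - \bar{x}) \right)^2 = \arg\max_{\|w\| = 1} w^\top \Sigma \, w,
\qquad
\Sigma = \frac{1}{n} \sum_{i=1}^{n} (x_i - \bar{x})(x_i - \bar{x})^\top
$$

For a unit vector $w$, Pythagoras splits each centred point into its projection and its orthogonal residual:

$$
\|x_i - \bar{x}\|^2 = \left( w^\top (x_i - \bar{x}) \right)^2 + \left\| (x_i - \bar{x}) - \left( w^\top (x_i - \bar{x}) \right) w \right\|^2
$$

The left-hand side does not depend on $w$, so maximising the summed squared projections is equivalent to minimising the summed squared orthogonal distances to the line spanned by $w$.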

Note

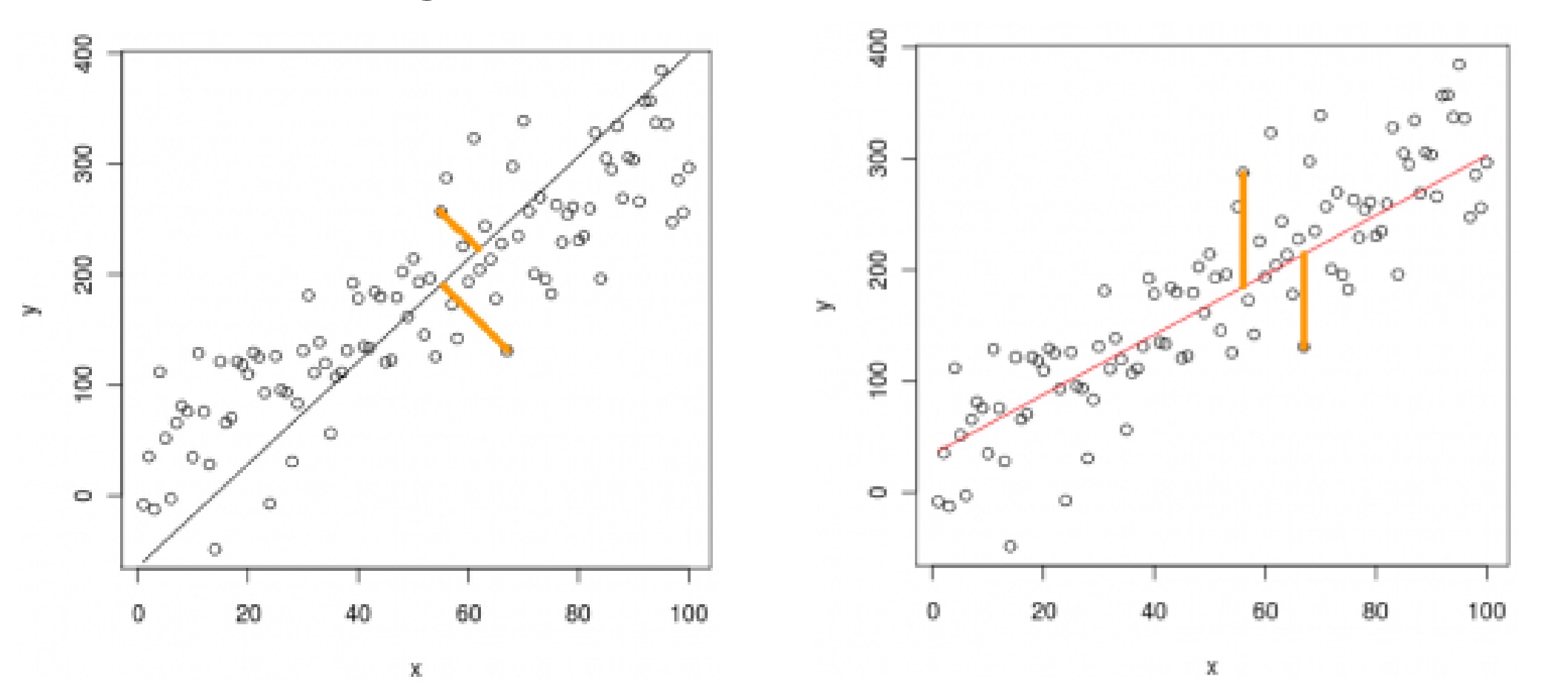

Note that this is fundamentally different from how linear regression finds the line of best fit, and that the two lines won’t be the same most of the time.

With linear regression we measure the error along the target coordinate (the vertical distance to the line), whereas with PCA we measure the error orthogonal to the principal direction.
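The difference between the two lines can be seen on a small synthetic example. This is a sketch on made-up 2-D data, comparing the ordinary least squares slope (vertical errors) with the slope of the first principal component (orthogonal errors).

```python
import numpy as np

rng = np.random.default_rng(4)
# Made-up data: y depends linearly on x, plus vertical noise
x = rng.normal(size=1000)
y = 0.5 * x + rng.normal(scale=0.5, size=1000)

xc, yc = x - x.mean(), y - y.mean()

# Linear regression of y on x: minimises squared vertical errors
slope_ols = (xc @ yc) / (xc @ xc)

# PCA: direction of largest variance, minimises squared orthogonal errors
cov = np.cov(np.stack([xc, yc]))              # rows are variables here
eigvals, eigvecs = np.linalg.eigh(cov)
pc1 = eigvecs[:, np.argmax(eigvals)]          # first principal component
slope_pca = pc1[1] / pc1[0]                   # sign of pc1 cancels in the ratio
```

On data like this the PCA line comes out steeper than the regression line, because PCA treats noise in both coordinates symmetrically while regression attributes all the error to `y`.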

Other uses of PCA:

tags: machine-learning