The belief is the estimated marginal probability of an event.

Let’s start with the first method for belief propagation, that is called the Sum-Product Messages

We can use a family of algorithm called belief propagation on graphs in order to do inference. In particular, we can do exact inference for graphs that are trees, and approximate inference for general graphs.

NOTE: The partition function is used to normalize the probabilities, and it’s needed in order to compute the marginal probability, and so do the inference. The problem is that the partition function has many terms inside, and so it’s not efficient at all. We can do better.

Sum-Product Algorithm

When a node is sending the message to one of it’s neighbour , it will send all the messages that neighbours have sent to it, excluding , because already has that information.

When computing the actual beliefs includes all its neighbour.

is the vector associated to the node . todo

Usually this method is implemented in a message update algorithm called loopy belief propagation, which is guaranteed to converge to correct marginals probabilities for each node, but it’s expensive.

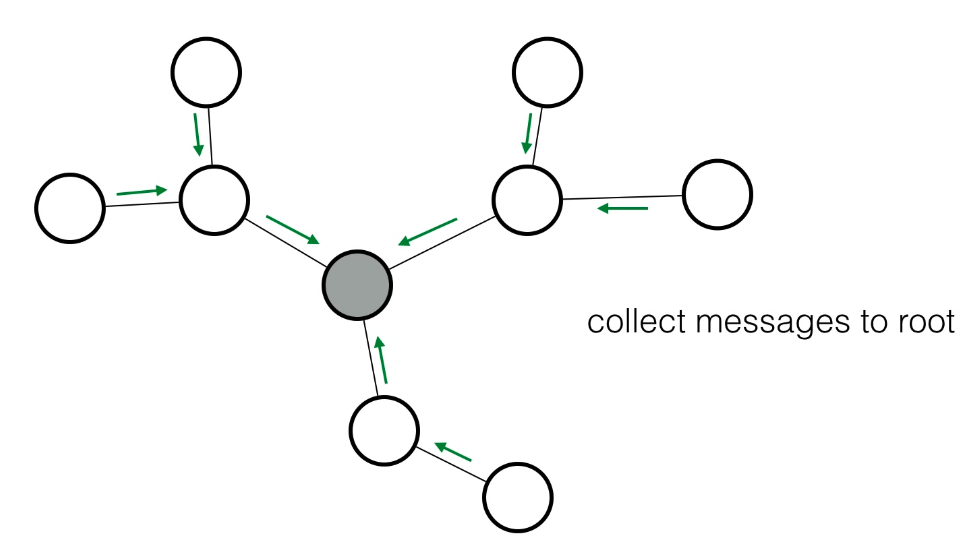

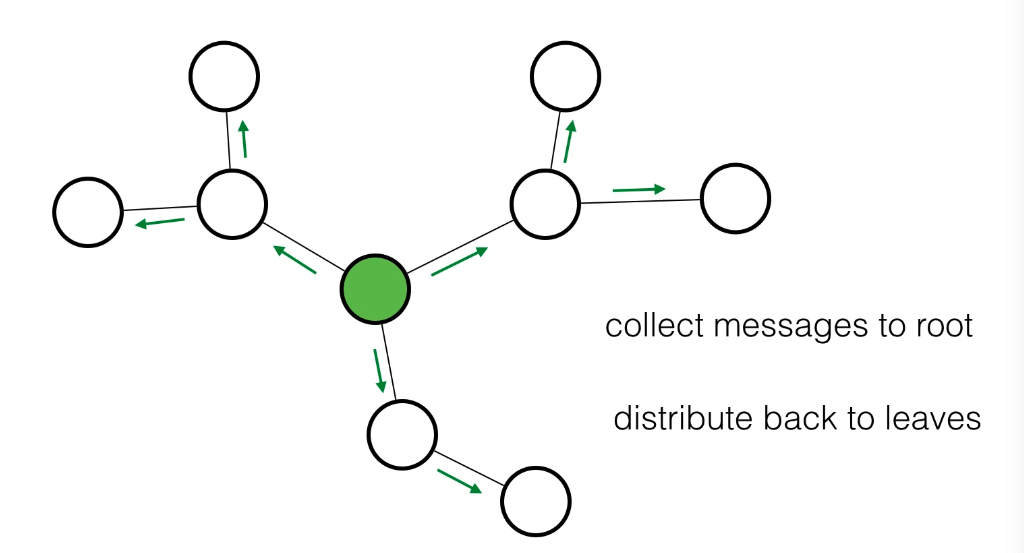

A more efficient algorithm is the collect and distribute schedule. It works in the following way:

- Collect all the messages that are going towards the root, and compute the figurative parents of the nodes that are sending those messages.

- The root collects all the messages, and distribute the information back to the leaves.

This algorithm, like loopy belief propagation is guaranteed to converge to marginals, but it’s way more efficient, since it takes a single update per message in order to converge (we traverse each edge just once to traverse, and once to distribute).

This algorithm, like loopy belief propagation is guaranteed to converge to marginals, but it’s way more efficient, since it takes a single update per message in order to converge (we traverse each edge just once to traverse, and once to distribute).

The guarantees are not so nice in non-trees structures. Note that if the graph is not a tree, we can choose any node as the root.

Collect and Distribute is the same as Forward-Backward algorithm in Bayes Networks.

Max-Sum algorithm

Similarly to the sum-product algorithm, we can use the max-sum algorithm in order to do inference for the MAP (Maximum a Posteriori).

tags: graph-theory - probability-theory resources: