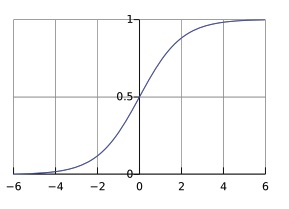

Logistic regression is a binary classifier used for categorical labels that builds on linear regression, but instead of predicting a continuous output, it applies the sigmoid function (specifically, the logistic sigmoid) to the linear output to produce a probability. This transformation maps any real-valued input to a value between 0 and 1, which allows logistic regression to output the probability that a given input belongs to a particular class.

As it happens for linear regression and polynomial regression, the logistic regression changes the parameters in order to minimize the loss. The parameters are the following:

- Coefficients for each feature in the input vector

- Intercept (or bias) which represents a constant offset in the decision boundary.

The logistic regression loss is defined as:

Where is the logistic sigmoid function

The sigmoid function is used to squash the output of into a value between . This means that the function has a saturation effect as it maps .

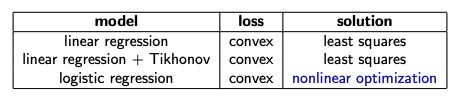

Note that this new loss is non-linear with respect to the parameters, since they appear in the non-linear sigmoid function, and it’s also non-convex.

We want the loss to be convex in order to perform Gradient Descent, so we can write a new loss function with this property that will output an high value if the output label is wrong, and a low value if it’s true.

This works well because if the prediction is near the , and the true label is , then . On the other hand, if the true label is , then .

We can rewrite the function on a single line:

The function is still non-linear with respect to the parameter, but is convex. This is known as Binary Cross Entropy Loss.

The loss for the entire dataset will be:

Closed form solution for Logistic Regression

As we did for the linear regression case, we want to find a closed form solution by putting the gradient of the loss equal to zero. Since the loss is convex, we will find the global minimum.

Math

Finding theta such as

We set the gradient to zero and we want to solve it for .

Since the gradient operation is linear, the gradient of the summation is just the sum of the gradient of each term, so we can consider just the gradient of a single term.

We can apply the chain rule in order to take the derivative of the composition of three function.

We solve the first partial derivative:

We solve the second partial derivative:

Finally we solve the third partial derivative:

Plugging everything in the equation at :

And so:

Now this should be done also for the left ther of , and everything should be repeated w.r.t. in order to compute the full gradient.

We can see from a part of the partial derivative which constitutes the gradient:

that the system of equations that we have when we set the gradient to wouldn’t be a linear system, since both and are involved in a non-linear function and so it cannot be easily solved.

Furthermore, the system is a transcendental equation, because it involves the exponential function inside the sigmoid function, which definition is the sum of an infinite series. Because of that, an analytical solution, and so a closed form solution, doesn’t exist.

In order to find the parameters that minimize the loss, we need to use an iterative method like stochastic gradient descent.

Multinomial Logistic Regression

If the output label are not binary, we want to perform multi-class classification, and so we need multinomial logistic regression.

The cost function for more than 2 classes translates to:

Where can be substituted with the softmax function, and so the final loss function becomes:

Where:

- is the feature vector

- is the number of classes

- is the weighted vector for class

- is the indicator function, which returns if (the true class for is j), and otherwise.

Note

The softmax function is used instead of the sigmoid since the first can squash into the interval multiple values, considering them all in the process, while the latter only works with two values independently. Using the sigmoid won’t return a vector that sums to 1, hence not a probability distribution.

tags: machine-learning